Detecting Web Attacks via 404 Errors in Your Logs

Oct 13, 2022

The art of log analysis is making sense of raw data, from the bits of information we know are bad to those that appear benign but are just as rich with information. Web logs are a great example: they hold more information than most realize, and one often overlooked aspect is 404 warnings.

In this article we'll explore 404 warnings in your web access logs and how they can be used to identify potential security

issues.

For those not familiar with the HTTP protocol: any time you request a page that does not exist, your web server (Apache, NGINX, IIS, etc.) will by default return an HTTP 404 Not Found status code, serve a 404 page, and record the request in its logs.

For example, if we visit a page that does not exist on Trunc.org, this is what is generated in the logs:

192.1.a.b - - [13/Sep/2022:05:05:13 +0000] "GET /doesnotexist HTTP/1.1" 404 5155 ...

This log entry shows the originating IP address (192.1.a.b), the date and time the request was made (Sep 13 at 05:05), the file being requested (/doesnotexist), and the HTTP status code, which in this instance is a 404 (file not found).
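As a minimal sketch, the fields described above can be pulled out of an access log line with a regular expression. The pattern assumes the common/combined log layout shown in the example; the names `LOG_PATTERN` and `parse_access_line` are hypothetical, and your server's log format may differ.

```python
import re

# Assumed layout: common/combined log format, as in the example line above.
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)'
)


def parse_access_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    return entry


# The sample line from this article (the IP is anonymized in the original).
sample = '192.1.a.b - - [13/Sep/2022:05:05:13 +0000] "GET /doesnotexist HTTP/1.1" 404 5155'
entry = parse_access_line(sample)
if entry is not None and entry["status"] == 404:
    print(entry["ip"], entry["time"], entry["path"])
```

Lines that do not match the assumed layout return `None` rather than raising, so a mixed log file can be scanned without stopping at the first odd entry.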

Monitoring these logs can be helpful to DevSecOps teams, often providing insight into missing links or improperly removed resources, or highlighting a potential misconfiguration on a web server or web application.

Identifying New Attacks via HTTP 404 Web Logs

In addition to providing insights into missing resources, or misconfigurations, HTTP 404 codes can be an invaluable resource

to security teams.

They can be used to monitor what attackers and bots are scanning for. Via these logs, teams can often gain insight into a new campaign before it is widely reported. They can highlight what campaigns are looking for on web servers, or which known weaknesses in web applications they are targeting. This is especially useful if you have multiple sites (and maybe a few honeypots to complement them), which helps rule out issues specific to one site.

Any time you see 404 requests for the same file across multiple sites in your network, that is usually a very strong indicator that the requests are coming from a malicious campaign. As researchers, we can use this to dive into specific areas to see what the attackers might be after, in some instances coordinating with organizations to take a closer look.

A great example of this is when we reported on how attackers were searching for and using the /.aws/credentials file to steal AWS credentials. We noticed that almost every site in our network had 404s for /.aws/credentials, which led us to research and report on our findings.
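The cross-site signal described above can be sketched as a simple aggregation: group 404s by requested path and keep the paths that were missing on several distinct sites. This is only an illustration under assumptions; the function name, the `min_sites` threshold, and the sample site names are hypothetical, and the entries are assumed to be already parsed into `(site, path, status)` tuples.

```python
from collections import defaultdict


def paths_missing_on_many_sites(entries, min_sites=3):
    """Map each path that returned 404 on at least `min_sites` distinct
    sites to the sorted list of those sites.

    `entries` is an iterable of (site, path, status) tuples.
    """
    sites_by_path = defaultdict(set)
    for site, path, status in entries:
        if status == 404:
            sites_by_path[path].add(site)
    return {
        path: sorted(sites)
        for path, sites in sites_by_path.items()
        if len(sites) >= min_sites
    }


# Hypothetical sample data; the site names are placeholders.
entries = [
    ("site-a.example", "/.aws/credentials", 404),
    ("site-b.example", "/.aws/credentials", 404),
    ("site-c.example", "/.aws/credentials", 404),
    ("site-a.example", "/old-page", 404),
    ("site-b.example", "/", 200),
]
suspicious = paths_missing_on_many_sites(entries)
for path, sites in suspicious.items():
    print(path, "->", ", ".join(sites))
```

A path that is missing on a single site (like `/old-page` in the sample) is more likely a broken link than a campaign, which is why the sketch counts distinct sites rather than total requests.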

404 logs can also be extremely helpful in identifying 0-days being scanned for and exploited in the wild.

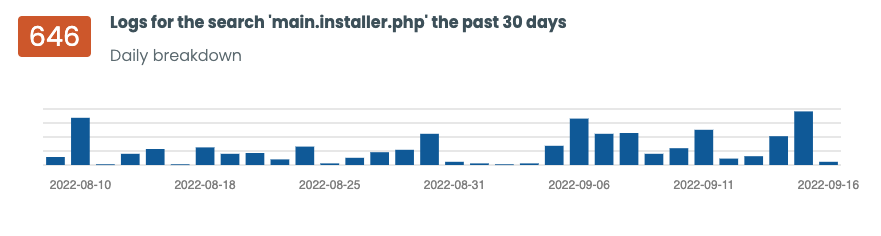

For example, Duplicator, a popular WordPress plugin with over 1 million installs, had a file disclosure vulnerability (and potential backup file disclosure) announced in August of 2022.

An attacker could query the plugin's main.installer.php file, located here:

/wp-content/backups-dup-lite/dup-installer/main.installer.php?is_daws=1

The response could display sensitive system information and allow the attacker to download the website backup files.

Almost immediately, we started seeing scans across our network looking for that file, starting on nearly the same day as the disclosure.

All requests looked similar to this:

mysite 20.25.144.a 404 162 29/Aug/2022:06:28:40 +0000 "GET /sitepath/dup-installer/main.installer.php HTTP/2.0...

The 404s alone told us what we needed to know. Monitoring them also helped us identify several backdoors and their preferred placement across multiple WordPress plugins.

Here are the top scans for this month alone:

/wp-content/plugins/about.php

/wp-content/plugins/wp-dester/dester/wp-dester.php

/wp-content/plugins/wp-classic_editor/werdt/werd.php

/wp-content/plugins/wordpresss3cll/up.php

/wp-content/plugins/wordpresss3cll-1/up.php

/wp-content/plugins/wp-roilback/includes/roilback.php

/wp-content/plugins/wp-roilbask/includes/roilbask.php

/wp-content/plugins/wp-classic/wp-classic/wp-classic.php

/wp-content/plugins/wp-json-api-disable/wwdv.php

If you see any of these files or plugins on your server, they are most likely malicious, and we recommend initiating your incident response process. These are not valid plugins or files.
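One way to check a server for the files listed above is a small script that tests whether each path exists under the WordPress root. This is a rough sketch, not a complete malware check: the function name `find_suspicious_files` is hypothetical, the path list is copied from this article, and the root directory passed in is a placeholder you would replace with your own.

```python
from pathlib import Path

# Paths copied from the list above, relative to the WordPress root.
SUSPICIOUS_PATHS = [
    "wp-content/plugins/about.php",
    "wp-content/plugins/wp-dester/dester/wp-dester.php",
    "wp-content/plugins/wp-classic_editor/werdt/werd.php",
    "wp-content/plugins/wordpresss3cll/up.php",
    "wp-content/plugins/wordpresss3cll-1/up.php",
    "wp-content/plugins/wp-roilback/includes/roilback.php",
    "wp-content/plugins/wp-roilbask/includes/roilbask.php",
    "wp-content/plugins/wp-classic/wp-classic/wp-classic.php",
    "wp-content/plugins/wp-json-api-disable/wwdv.php",
]


def find_suspicious_files(wordpress_root):
    """Return the listed relative paths that exist as files under the root."""
    root = Path(wordpress_root)
    return [rel for rel in SUSPICIOUS_PATHS if (root / rel).is_file()]


# "." is a placeholder; point this at your actual WordPress root.
for hit in find_suspicious_files("."):
    print("found:", hit)
```

An empty result only means these specific paths are absent; it says nothing about other backdoors, so it complements rather than replaces log monitoring.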

These are just a few examples of how log analysis can be useful, and of how what we might believe to be benign (like 404 codes) is actually extremely powerful in identifying vulnerability scanners, other pentesting tools, and active campaigns, all of which tend to be noisy on the 404 side.
